A Deep Dive Into AI Large Models: Parameters, Tokens, Context Windows

Parameters, tokens, context windows, context length, and temperature are core concepts in large AI models. Together they shape a model's complexity, performance, and capabilities. In large language models (LLMs), understanding tokens and context windows is essential to understanding how these models process and generate language.
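To make the relationship between tokens and a context window concrete, here is a minimal sketch. It assumes a toy tokenizer that splits on words and punctuation. Real LLM tokenizers use subword schemes and will produce different counts. The names `toy_tokenize`, `fits_in_context`, and `truncate_to_window` are hypothetical and exist only for illustration.

```python
import re


def toy_tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens.

    Assumption: this is a stand-in for a real subword tokenizer.
    """
    return re.findall(r"\w+|[^\w\s]", text)


def fits_in_context(prompt: str, context_window: int, reserved_for_output: int = 0) -> bool:
    """Return True if the prompt tokens plus the reserved output budget fit in the window."""
    return len(toy_tokenize(prompt)) + reserved_for_output <= context_window


def truncate_to_window(prompt: str, context_window: int) -> list[str]:
    """Keep only the most recent tokens that fit in the window."""
    if context_window <= 0:
        return []
    return toy_tokenize(prompt)[-context_window:]
```

The point of the sketch is that the window is a budget shared by the prompt and the generated output: anything that does not fit has to be dropped, summarized, or retrieved on demand.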

What is a context window for large language models? Learn about AI context windows: how token limits work, why attention costs scale, and how to overcome memory constraints with RAG.

Context windows determine how much code an AI model can process at once, so choose wisely to balance capability with cost. Before diving deeper, understand how different models compare with our comprehensive guides on GPT-5 for coding, Claude 4 Sonnet capabilities, and Gemini 2.5 analysis.

In 2025, we've witnessed a remarkable evolution as context windows have expanded from mere thousands to millions of tokens, fundamentally transforming the landscape of artificial intelligence applications. In this guide, we will unpack what context windows are, why they matter, the engineering magic behind extending them, and practical strategies to get the most out of your model's memory.
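On why attention costs scale with context length: a rough sketch, assuming standard full self-attention in which every token is scored against every other token. The function name `attention_score_count` and the default head and layer counts are hypothetical. Causal masking or sparse attention variants change the constant factors, and the latter can change the growth rate.

```python
def attention_score_count(n_tokens: int, n_heads: int = 1, n_layers: int = 1) -> int:
    """Number of query-key scores computed by full self-attention in one forward pass.

    Assumption: each head in each layer scores all n_tokens x n_tokens pairs.
    """
    return n_tokens * n_tokens * n_heads * n_layers


# Doubling the context length quadruples the number of scores.
for n in (1_000, 2_000, 4_000):
    print(n, attention_score_count(n))
```

Under these assumptions, going from thousands to millions of tokens multiplies the number of pairwise scores by roughly a factor of a million, which is why longer windows cost more to serve.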

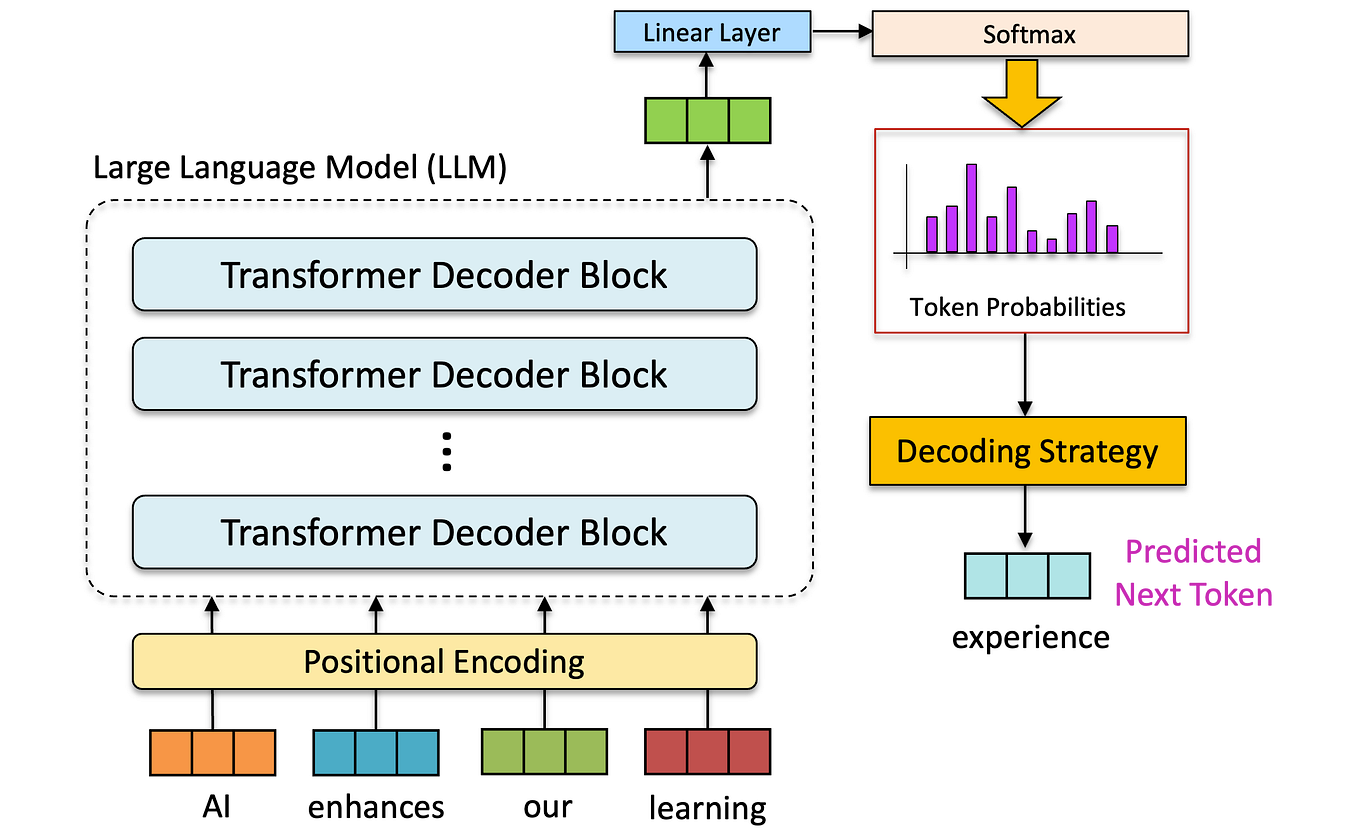

Discover the inner workings of large language models and explore their key components, including LLM tokens, parameters, and weights.

At this point, you may be wondering why some models have larger context windows than others. To understand this, let's first review how the attention mechanism works in the figure below; if you aren't familiar with the details of attention, this is covered in detail in my previous article.

For AI leaders, product managers, and engineers, understanding how context windows actually work, and why they can't scale indefinitely, is critical to building real-world AI systems. This article breaks down how context windows are defined by the math of attention, why scaling them hits hard limits, and the engineering innovations that extend them.

In the rapidly evolving field of artificial intelligence (AI), large language models (LLMs) have emerged as a cornerstone of natural language processing (NLP) technologies. At the heart.
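For reference, the math of attention can be written compactly. This is the standard scaled dot-product form, given here as a sketch rather than as the exact formulation used by any particular model. The symbols are assumptions introduced for illustration: $n$ is the number of tokens in the context, $Q, K \in \mathbb{R}^{n \times d_k}$ are the query and key matrices, and $V \in \mathbb{R}^{n \times d_v}$ is the value matrix.

$$
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
$$

The product $Q K^{\top}$ is an $n \times n$ matrix, so the time and memory needed for the scores grow as $O(n^2)$ in the context length. This quadratic growth is the basic reason a context window cannot be enlarged without added cost.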

