AI Networking Fabrics Explained 🔥: GPU Clusters, RDMA, InfiniBand, and Data Center Architecture

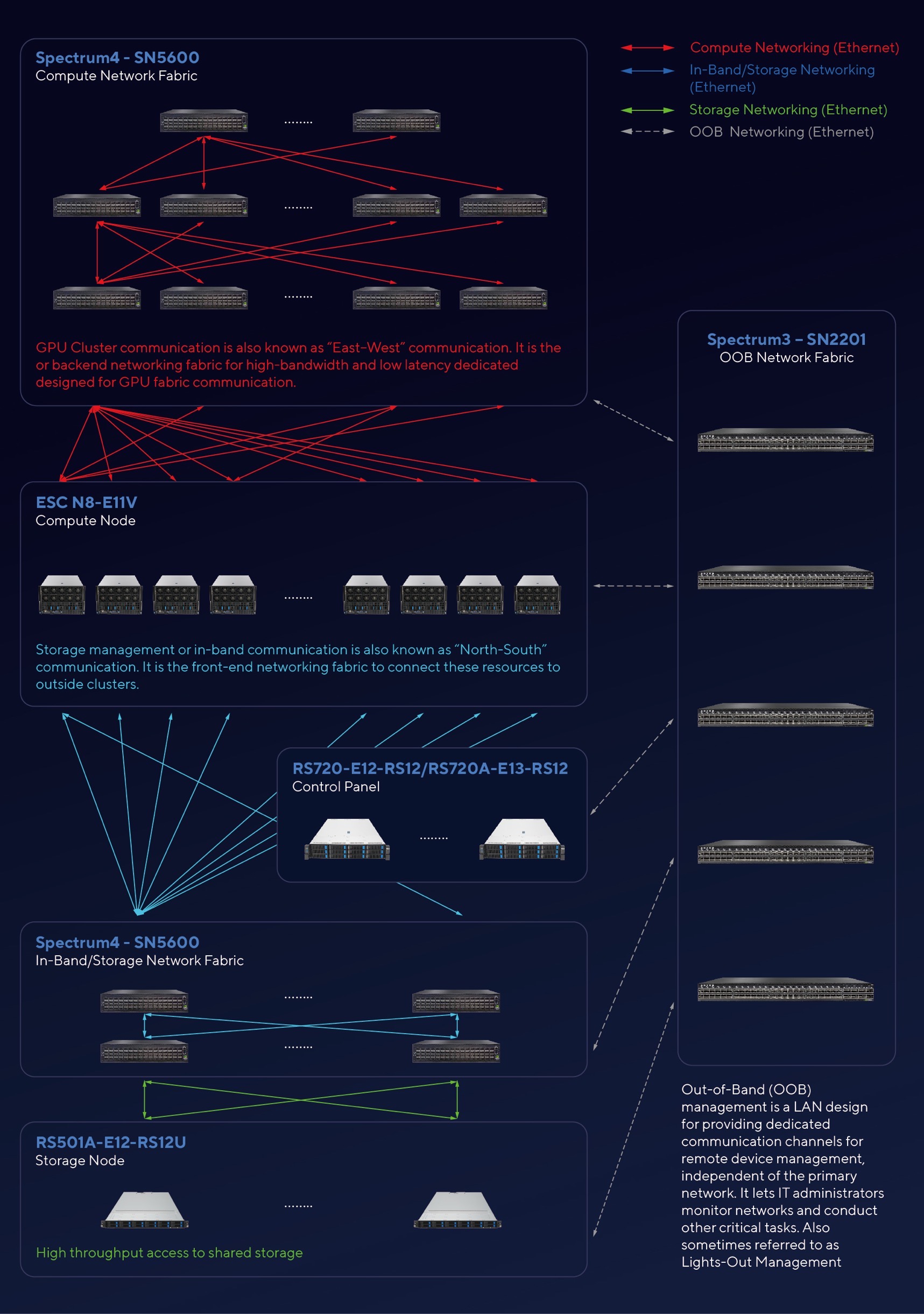

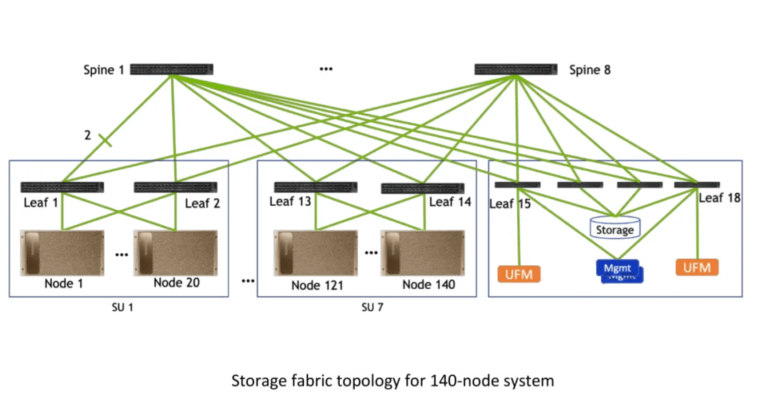

*Doubling Network File System Performance with RDMA-Enabled Networking*

As figure 1 shows, back-end network fabrics support both GPU training clusters and AI storage systems. Implemented as separate back-end fabrics, the compute training network and the storage network each provide high-performance, low-latency networking for their respective service. To design an AI fabric correctly, you must first understand why GPUs need to communicate at all and what the communication patterns look like at the protocol level. Modern large AI model training uses three fundamental parallelism strategies (data, tensor, and pipeline parallelism), each generating a distinct network traffic pattern.
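To put a number on one of these traffic patterns: in data parallelism, every replica must average its gradients with all the others after each step, commonly via a ring all-reduce. The sketch below is a back-of-envelope estimate, assuming a flat ring in which every hop crosses the scale-out fabric and each GPU has one dedicated NIC; the function names and the 7B-parameter example are hypothetical, and the time estimate counts bandwidth only.

```python
def ring_allreduce_bytes_per_gpu(gradient_bytes: float, num_gpus: int) -> float:
    """Bytes each GPU sends (and also receives) in one ring all-reduce.

    The ring algorithm runs a reduce-scatter phase followed by an all-gather
    phase. Each phase takes (N - 1) steps, and in every step each GPU sends
    one chunk of size gradient_bytes / N to its ring neighbour.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return 2 * (num_gpus - 1) / num_gpus * gradient_bytes


def allreduce_seconds(gradient_bytes: float, num_gpus: int, link_gbps: float) -> float:
    """Bandwidth-only estimate of all-reduce time with one NIC per GPU.

    Ignores per-step latency, protocol overhead, congestion, and any overlap
    with backward-pass compute, so real runs will be slower.
    """
    bytes_per_second = link_gbps * 1e9 / 8  # Gb/s -> bytes/s
    return ring_allreduce_bytes_per_gpu(gradient_bytes, num_gpus) / bytes_per_second


if __name__ == "__main__":
    # Hypothetical example: 7B parameters with 2-byte (bf16) gradients,
    # 8 data-parallel replicas, one 400 Gb/s NIC per GPU.
    grad = 7e9 * 2
    print(ring_allreduce_bytes_per_gpu(grad, 8) / 1e9, "GB sent per GPU")
    print(round(allreduce_seconds(grad, 8, 400), 3), "s (bandwidth-only)")
```

For this example the script reports 24.5 GB per GPU and roughly 0.49 s per all-reduce. Because the 2(N - 1)/N factor approaches 2 as N grows, per-GPU traffic stays near twice the gradient size regardless of cluster size, while every step of the ring still waits on its slowest link.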

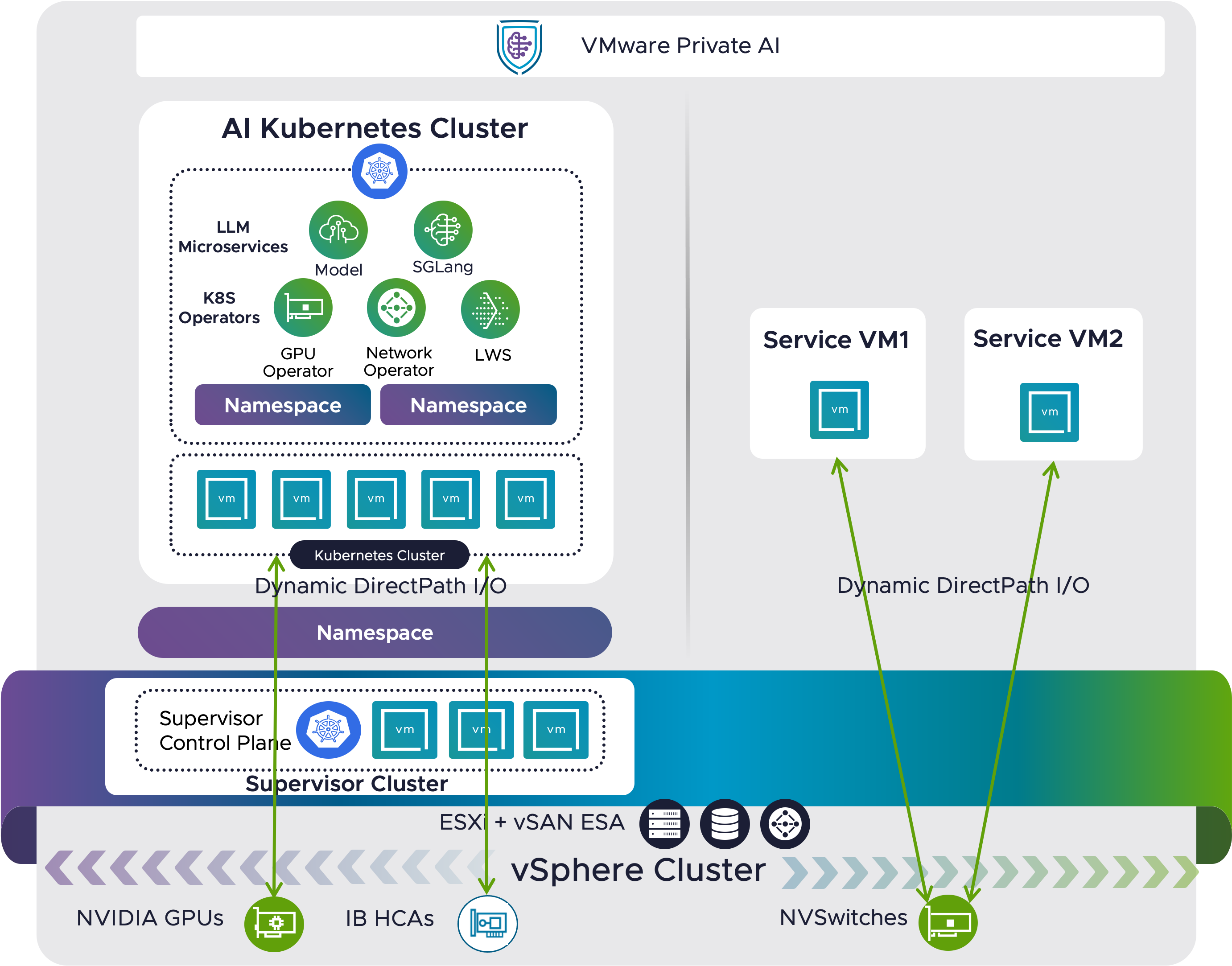

*Deploy Distributed LLM Inference with GPUDirect RDMA over InfiniBand in …*

Back-end networking in AI clusters refers to the interconnect infrastructure that carries internal communication between AI accelerators (such as GPUs) within a data center (scale-out) or between data centers (scale-across). This document is intended to provide a best-practice blueprint for building a modern network environment that lets AI/ML workloads run at their best using currently shipping hardware and software features. This guide focuses on scale-out fabrics (also known as "back-end" or "east-west" fabrics), which interconnect dozens of compute nodes hosting hundreds or even thousands of GPUs to process AI workloads. Building a fabric for AI training is now an architectural discipline of its own: the industry is transitioning from ad hoc performance tuning to systemic co-design of topology, protocol, and …
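To see how switch count scales with "hundreds or even thousands of GPUs", here is a rough sizing sketch for a non-blocking two-tier leaf-spine (Clos) scale-out fabric. It assumes one scale-out NIC port per GPU, identical switches of a given port count (radix), and a plain leaf-spine layout without rail optimization, storage ports, or oversubscription; `plan_two_tier` and `LeafSpinePlan` are hypothetical names for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class LeafSpinePlan:
    leaves: int     # leaf (top-of-rack) switches
    spines: int     # spine switches
    gpu_ports: int  # GPU-facing ports provisioned across all leaves


def plan_two_tier(num_gpus: int, radix: int) -> LeafSpinePlan:
    """Size a non-blocking two-tier leaf-spine fabric, one NIC port per GPU.

    Each leaf splits its ports 50/50: radix/2 down to GPUs and radix/2 up to
    spines, giving 1:1 (non-blocking) subscription. Every leaf connects to
    every spine, so each spine needs a port per leaf and a two-tier design
    tops out at `radix` leaves, i.e. radix * radix / 2 GPUs.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number >= 2")
    down = up = radix // 2
    leaves = math.ceil(num_gpus / down)
    if leaves > radix:
        raise ValueError(
            f"{num_gpus} GPUs exceed the two-tier limit of {radix * down}; "
            "a third (super-spine) tier would be needed"
        )
    # Total leaf uplinks spread over spines that each have `radix` ports.
    # A real design would also round so uplinks divide evenly across spines.
    spines = math.ceil(leaves * up / radix)
    return LeafSpinePlan(leaves=leaves, spines=spines, gpu_ports=leaves * down)


if __name__ == "__main__":
    for gpus in (256, 1024, 2048):
        print(gpus, plan_two_tier(gpus, radix=64))
```

Under these assumptions, 64-port switches top out at 64 × 32 = 2,048 GPUs in two tiers (64 leaves, 32 spines); larger clusters need a third switching tier or higher-radix switches.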

*Designing AI Clusters Network Infrastructure for Efficient Data Center*

An episode on this topic shed light on the challenges and solutions in automating networks for AI workloads, prompting me to explore the architectural distinctions and considerations in AI cluster networks. What follows is an overview of the networking challenges and design considerations in building fabrics for large-scale AI/ML training clusters, from RDMA to rail-optimized topologies. The purpose of this introductory paper is to go under the hood and take a closer look at the AI networking protocols commonly used today and how they operate in an AI cluster.

How does an AI fabric differ from a traditional data center network? Unlike traditional networks built for general IT traffic, an AI fabric is optimized for AI workloads, offering lossless transmission (credit-based flow control in InfiniBand, or PFC and ECN in RoCEv2 Ethernet), real-time synchronization, and higher throughput across GPU clusters.
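To make "lossless" concrete, here is a toy, tick-based model of credit-based link-level flow control in the spirit of InfiniBand: the sender transmits only while it holds credits (advertised free receiver buffer), so the receive buffer can never overflow. It is a sketch under simplifying assumptions: a single lane, one credit per packet, and credits returned instantly when the receiver drains a packet. Real InfiniBand tracks credits per virtual lane in fixed-size buffer blocks, and RoCEv2 Ethernet approximates losslessness differently, with PFC pause frames plus ECN-based congestion control. `simulate_link` is a hypothetical name.

```python
def simulate_link(ticks: int, buffer_slots: int, send_rate: int,
                  drain_rate: int, credit_based: bool) -> tuple[int, int, int]:
    """Toy model of one link; returns (delivered, dropped, stalled) counts.

    The sender always has data queued and tries to send `send_rate` packets
    per tick. The receiver buffer holds `buffer_slots` packets and drains
    up to `drain_rate` packets per tick.
    """
    queued = 0               # packets sitting in the receiver buffer
    credits = buffer_slots   # sender's count of free receiver slots
    delivered = dropped = stalled = 0

    for _ in range(ticks):
        for _ in range(send_rate):
            if credit_based:
                if credits == 0:
                    stalled += 1      # no credit: hold the packet at the sender
                    continue
                credits -= 1          # spend one credit per packet sent
                queued += 1
            elif queued == buffer_slots:
                dropped += 1          # no flow control: a full buffer drops it
            else:
                queued += 1

        drained = min(queued, drain_rate)
        queued -= drained
        delivered += drained
        if credit_based:
            credits += drained        # freed slots return as credits (instantly here)

    return delivered, dropped, stalled


if __name__ == "__main__":
    # The sender offers twice what the receiver can drain.
    print("credit-based:", simulate_link(100, 8, 4, 2, credit_based=True))
    print("no flow ctl: ", simulate_link(100, 8, 4, 2, credit_based=False))
```

Both runs deliver 200 packets over 100 ticks because the receiver's drain rate is the bottleneck. The difference is that the link without flow control discards 194 packets that a transport would have to retransmit, while the credit-based link returns (200, 0, 194): it drops nothing and simply holds the excess at the sender.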
