Can Cursor's New Agents Mode Run a Local LLM?

Cursor 2's new model, Composer, has landed, but does it play nicely with Agent mode when you use a local LLM? Here is how to set it up and run it locally: Cursor routes the request through ngrok to your local LLM running in LM Studio. This setup lets you keep Cursor's coding experience while keeping inference fully local.
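The routing described above relies on LM Studio exposing an OpenAI-compatible HTTP server (by default on `localhost:1234`) and on ngrok forwarding a public URL to it, for example with `ngrok http 1234`. The sketch below builds the kind of chat-completion request that ends up at that server. It is a minimal illustration under stated assumptions: the tunnel URL and model name are hypothetical placeholders, and nothing is sent unless you call `send` yourself.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute your own ngrok forwarding URL
# and the model identifier that LM Studio reports for the loaded model.
NGROK_BASE_URL = "https://example-tunnel.ngrok-free.app"
MODEL_NAME = "local-model"


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    url = base_url.rstrip("/") + "/v1/chat/completions"
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request(NGROK_BASE_URL, MODEL_NAME, "Say hello in one word.")
    print(req.full_url)
    # print(send(req))  # uncomment once the tunnel and LM Studio server are running
```

Keeping request construction separate from sending makes it easy to check the URL and body before the tunnel is up.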

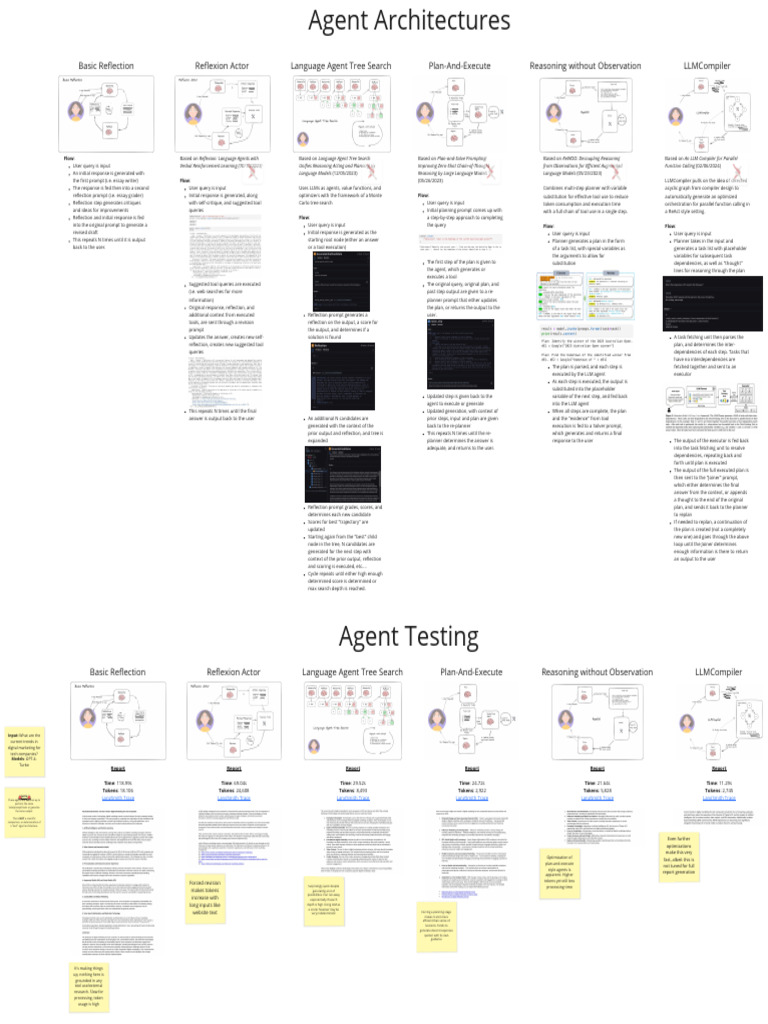

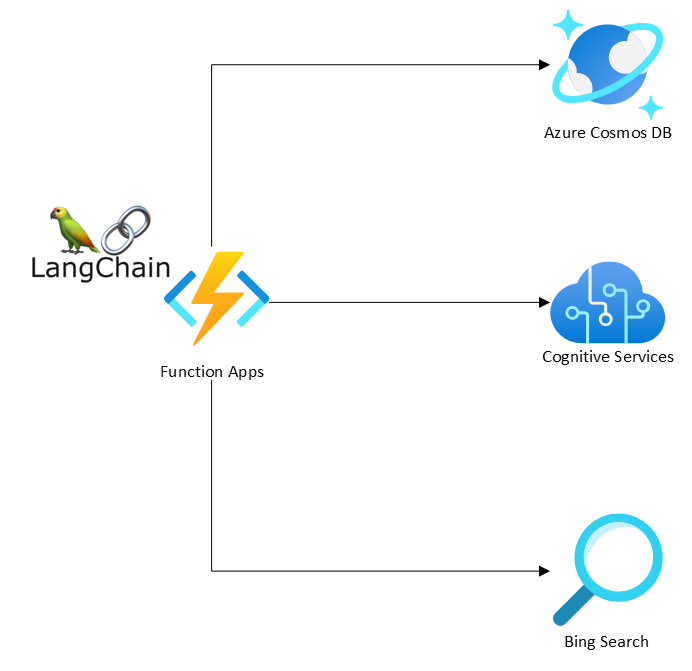

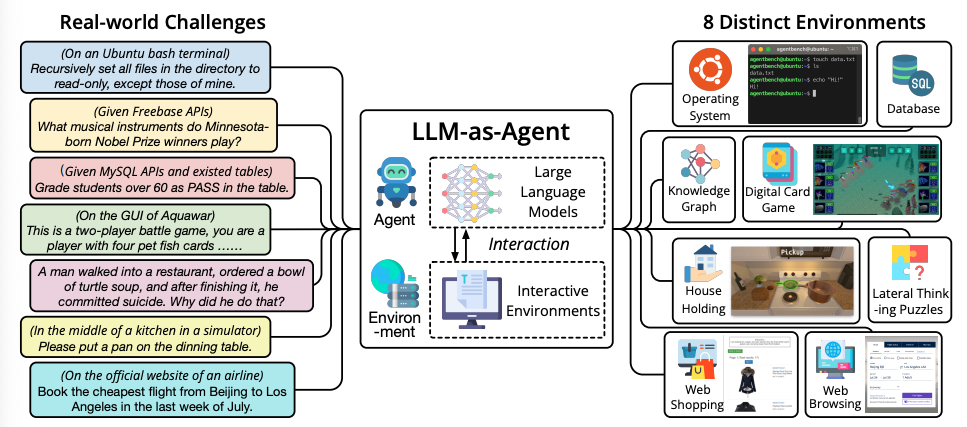

What if I told you that you could run state-of-the-art language models locally and connect them directly to the Cursor IDE for free? That's exactly what we're going to do today, and more.

The new Cursor interface brings clarity to the work agents produce, pulling you up to a higher level of abstraction while letting you dig deeper when you want. It's faster and cleaner, with a multi-repo layout, seamless handoff between local and cloud agents, and the option to switch back to the Cursor IDE at any time.

Most tutorials make local LLM integration with Cursor sound impossible. After countless late-night coding sessions and troubleshooting, I can tell you it's doable, but there are some gotchas, and knowing them will save you hours of frustration.

8. Start using Cursor with your local LLM. In Cursor, open a file or start a new code file, then begin typing or prompt the AI with a query. (Select one of the OpenAI "turbo" models in Cursor so that it triggers the OpenAI-style API call.) The request will be routed to your local LLM in LM Studio, exposed via ngrok.
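Before relying on Cursor to route requests, it can help to confirm that the ngrok URL actually reaches LM Studio and to see which model IDs it advertises. A minimal sketch, assuming the server exposes the OpenAI-style `GET /v1/models` endpoint (LM Studio's local server does); the sample payload in `__main__` is made up for illustration.

```python
import json
import urllib.request
from typing import List


def parse_model_ids(raw_json: str) -> List[str]:
    """Extract model IDs from an OpenAI-style /v1/models response body."""
    payload = json.loads(raw_json)
    return [entry["id"] for entry in payload.get("data", []) if "id" in entry]


def list_models(base_url: str, timeout: float = 10.0) -> List[str]:
    """Query GET {base_url}/v1/models and return the advertised model IDs."""
    url = base_url.rstrip("/") + "/v1/models"
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return parse_model_ids(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Made-up sample response, shaped like the OpenAI list format.
    sample = '{"object": "list", "data": [{"id": "example-model-a"}, {"id": "example-model-b"}]}'
    print(parse_model_ids(sample))  # prints ['example-model-a', 'example-model-b']
```

Calling `list_models` with your ngrok URL should return the same IDs that LM Studio shows locally; an empty list or a connection error points at the tunnel or the server rather than at Cursor.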

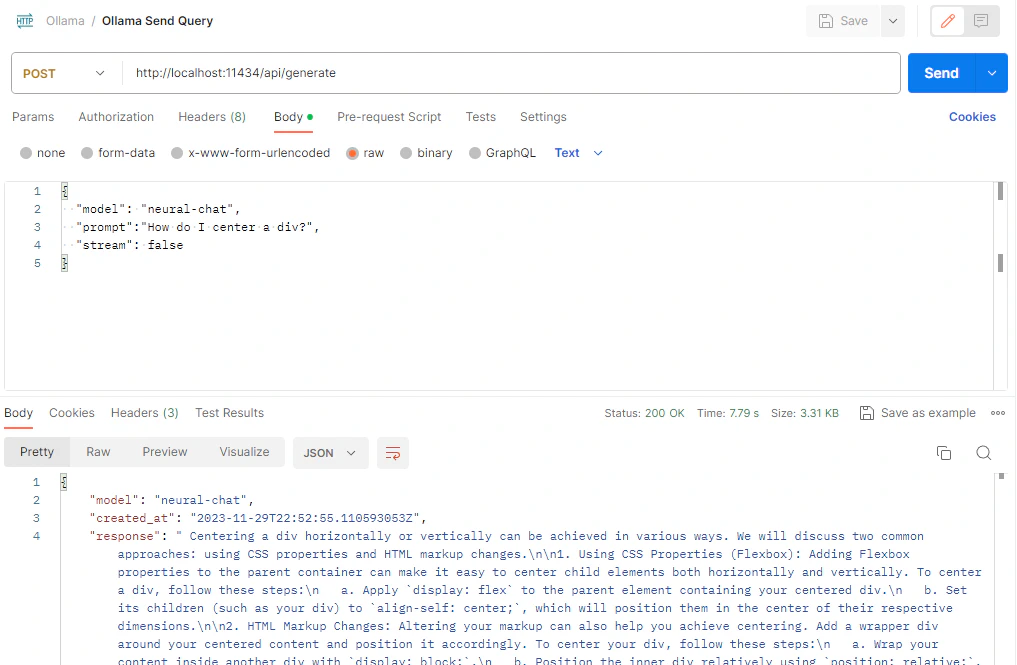

Running large language models (LLMs) locally is becoming increasingly accessible, and integrating them directly into your IDE workflow can boost productivity considerably if you already have capable hardware. This guide demonstrates how to run LLMs locally using Ollama and connect them to the Cursor IDE.

1. Setting up Ollama for local LLM deployment.

Open a new chat in Cursor and send a test prompt; you should receive a response without errors. Note: toggle the model you use in Cursor's chat section; in my case, it's DeepSeek R1. Congratulations, you're now running Cursor AI entirely free!

Run Cursor AI locally on Windows using LM Studio (untested on Linux or macOS).

Today I configured my code editors, VS Code and Cursor, to use a local LLM rather than Copilot or Cursor Chat. I have an M1 MacBook Pro running Ventura 13.7.1. So far, the models work well, though they are a bit slower than their cloud counterparts.

If you want to use local LLMs with Cursor, this approach is a practical starting point. It's not flawless, but it lets you customize your AI coding setup while cutting costs.
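The Ollama setup mentioned above can be checked from a script before wiring it into an editor. A minimal sketch, assuming Ollama is listening on its default address `http://localhost:11434` and that a model tagged `deepseek-r1` has been pulled (the tag is an assumption; use whatever `ollama list` shows). It uses Ollama's native `/api/generate` endpoint with streaming turned off, so the reply arrives as a single JSON object whose `response` field holds the text.

```python
import json
import urllib.request

# Ollama's default local address; "deepseek-r1" is an assumed model tag
# (pull it first, e.g. with `ollama pull deepseek-r1`).
OLLAMA_URL = "http://localhost:11434"
MODEL_TAG = "deepseek-r1"


def build_generate_payload(model: str, prompt: str) -> bytes:
    """Encode a non-streaming request body for Ollama's /api/generate."""
    return json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")


def extract_response(raw_json: str) -> str:
    """Pull the generated text out of a non-streaming /api/generate reply."""
    return json.loads(raw_json)["response"]


def generate(prompt: str, base_url: str = OLLAMA_URL, model: str = MODEL_TAG) -> str:
    """Send one prompt to a locally running Ollama server and return its reply."""
    request = urllib.request.Request(
        base_url.rstrip("/") + "/api/generate",
        data=build_generate_payload(model, prompt),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return extract_response(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Shows the JSON body that would be sent; uncomment the call below once
    # Ollama is running locally (desktop app or `ollama serve`).
    print(build_generate_payload(MODEL_TAG, "Write a one-line Python hello world.").decode("utf-8"))
    # print(generate("Write a one-line Python hello world."))
```

For an editor that expects an OpenAI-style base URL, Ollama also serves an OpenAI-compatible API under `http://localhost:11434/v1`, so the same kind of chat-completion request used for LM Studio can point there instead.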