Claude Code with Anthropic API Compatibility | Ollama Blog

Ollama v0.14.0 and later are compatible with the Anthropic Messages API, making it possible to use tools like Claude Code with open-source models. You can run Claude Code with local models on your machine, or connect to cloud models through Ollama. Running locally keeps enterprise code generation on your own hardware, avoiding cloud round-trips and per-token costs. Note that Ollama does not serve Anthropic's own Claude models: Claude Code is the client, and the model answering its requests is whichever open model Ollama is running.
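To make "compatible with the Anthropic Messages API" concrete, here is a minimal Python sketch of the kind of request a Messages API client sends. It assumes Ollama is listening on its default address, `http://localhost:11434`, accepts Anthropic-style requests on `/v1/messages`, and has a model tagged `qwen3-coder` pulled; the `build_messages_request` helper is hypothetical. It only builds the request so you can inspect it; sending it requires a running server.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; change it if you moved the server.
OLLAMA_BASE_URL = "http://localhost:11434"


def build_messages_request(prompt: str, model: str = "qwen3-coder",
                           max_tokens: int = 1024,
                           base_url: str = OLLAMA_BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an Anthropic Messages API request.

    Hypothetical helper: the body uses the Messages format (model,
    max_tokens, messages); only the base URL differs from Anthropic's cloud.
    """
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {
        "content-type": "application/json",
        "anthropic-version": "2023-06-01",
        # Assumption: a local Ollama server ignores the key, but clients send one.
        "x-api-key": "ollama",
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    req = build_messages_request("Write a Python function that reverses a string.")
    print(req.get_method(), req.full_url)
    # To actually send it (requires a running Ollama server and the model pulled):
    # with urllib.request.urlopen(req) as resp:
    #     reply = json.load(resp)
    #     print(reply["content"][0]["text"])
```

Because only the base URL changes, any client that speaks the Messages format, Claude Code included, can be redirected to the local server without changing how it builds requests.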

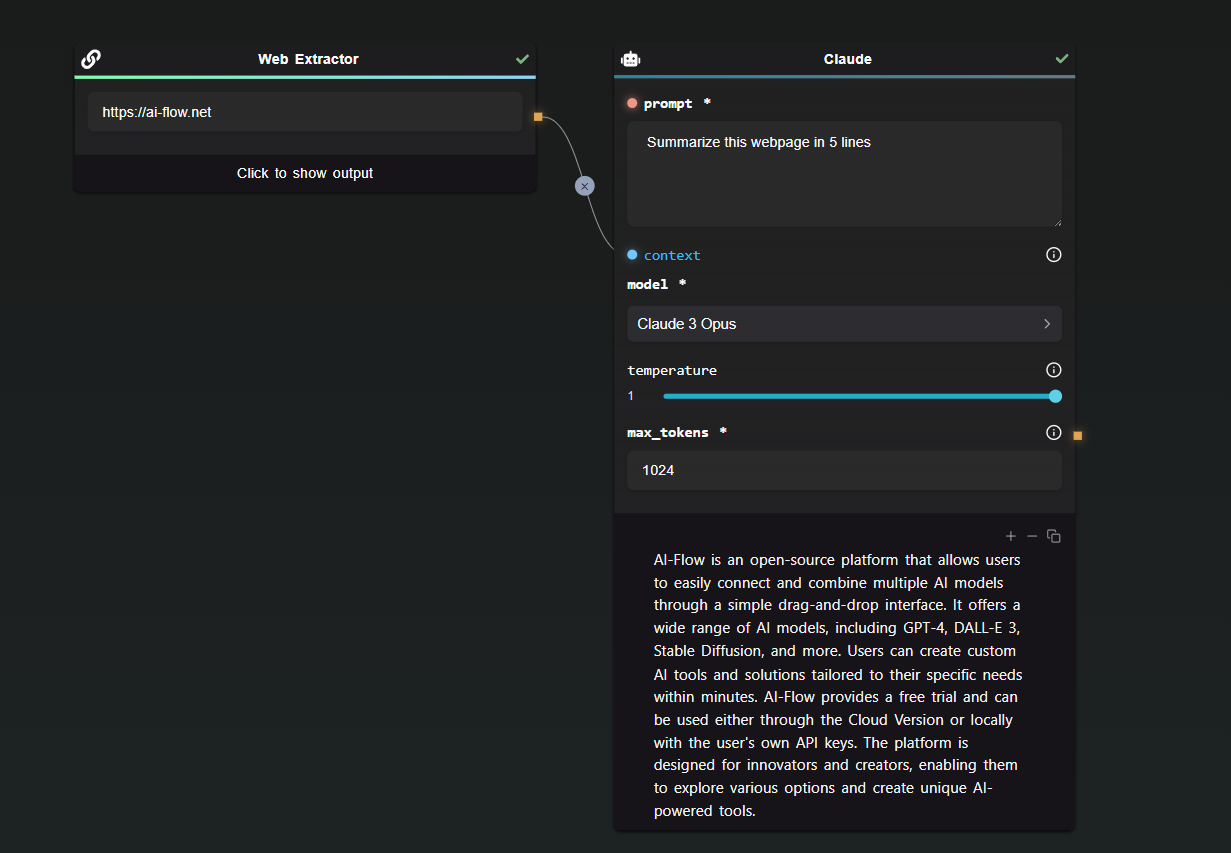

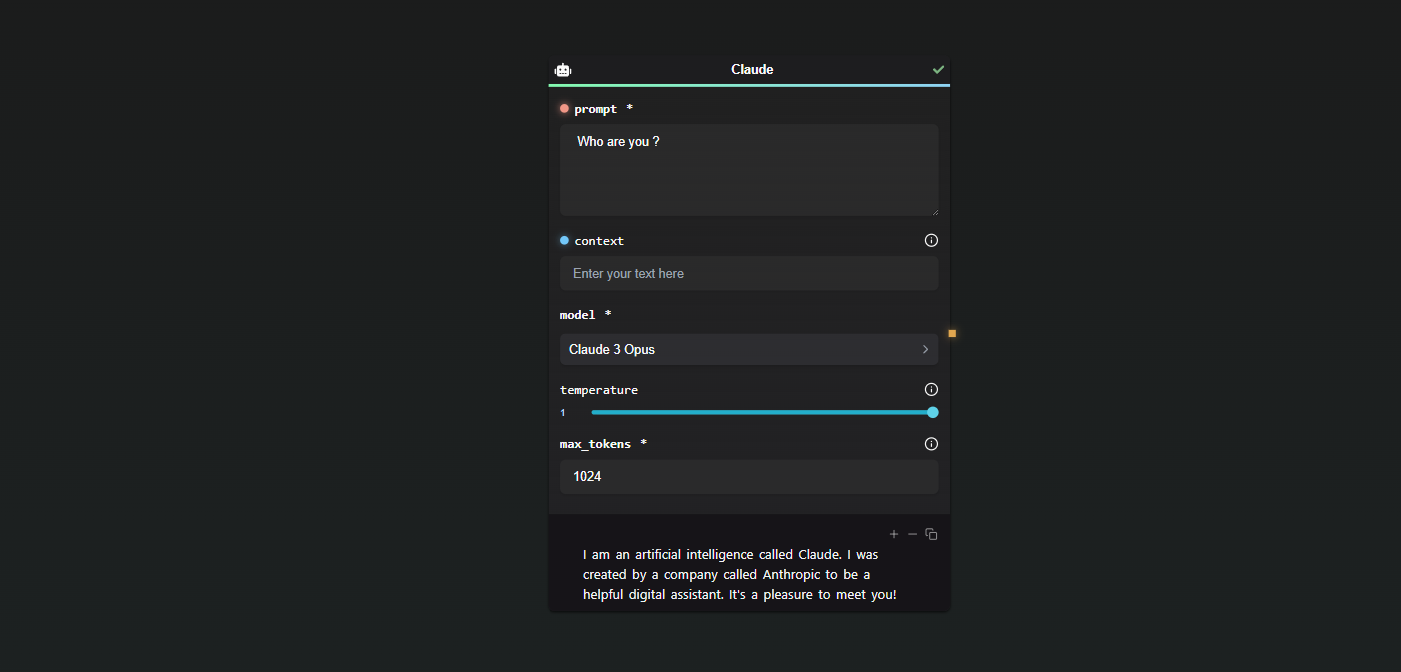

This guide covers the full setup: installing Ollama and Claude Code, choosing a model that fits in 16 GB of RAM, connecting the pieces, and understanding the real tradeoffs. In this tutorial, we'll wire up Claude Code to Ollama so that Claude Code's agentic, terminal-first workflow runs on open-source models: we will install Claude Code and start Ollama locally. Alternatively, Claude Code can be pointed at local LLMs through Olla's Anthropic Messages API translator, which adds load balancing, failover, streaming, and model unification across Ollama, LM Studio, vLLM, and other OpenAI-compatible backends. Either way, you can now use Anthropic's Claude Code with open-source models, combining agentic coding with local AI.
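Whether a model "fits in 16 GB of RAM" is mostly arithmetic: the weights take roughly parameter count × bits per weight ÷ 8 bytes, plus room for the context (KV cache) and runtime buffers. The Python sketch below applies that rule of thumb. The 20% overhead factor and the 2 GB reserved for the operating system are assumptions, not Ollama figures, and the helper names are hypothetical; real usage varies with context length and quantization format.

```python
def estimated_model_gb(params_billion: float, bits_per_weight: float,
                       overhead: float = 0.20) -> float:
    """Rough memory estimate in GB for running a quantized model.

    Weights: params * bits / 8 bytes. `overhead` (assumed 20%) stands in for
    the KV cache and runtime buffers, which really depend on context length.
    """
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb * (1 + overhead)


def fits_in_ram(params_billion: float, bits_per_weight: float,
                ram_gb: float = 16.0, reserved_gb: float = 2.0) -> bool:
    """True if the estimate fits after reserving memory for the OS (assumed 2 GB)."""
    return estimated_model_gb(params_billion, bits_per_weight) <= ram_gb - reserved_gb


if __name__ == "__main__":
    # Illustrative sizes only; check each model's page for its real parameter count.
    for params, bits in [(8, 4), (8, 8), (14, 4), (30, 4)]:
        gb = estimated_model_gb(params, bits)
        print(f"{params}B at {bits}-bit: ~{gb:.1f} GB, fits in 16 GB: {fits_in_ram(params, bits)}")
```

Under these assumptions a 4-bit 14B model (about 8.4 GB) leaves comfortable headroom, while a 4-bit 30B model (about 18 GB) does not fit. Treat the result as a first filter before trying a model, not a guarantee.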

In January 2026, Ollama added support for the Anthropic Messages API, enabling Claude Code to connect directly to any Ollama model. This tutorial explains how to install Claude Code, pull and run local models with Ollama, and configure your environment for a seamless local coding experience.

Here is the free way to use Claude Code with Ollama. Well, the wait is over! Ollama just shipped a major update that adds Anthropic Messages API compatibility, which means you can now run Claude Code using your own local models! 🥳

So what exactly is this "Claude Code" magic? Claude Code is Anthropic's agentic coding tool, with subagent support, that can read, modify, and execute code in your working directory. Through Ollama's Anthropic-compatible API, you can use open models like GLM-4.7, Qwen3-Coder, and gpt-oss with Claude Code.

Just a couple of weeks ago, Ollama announced that its latest versions are compatible with the Anthropic Messages API. If that statement sounds a little underwhelming, what it means in practice is that you can now run Claude Code with local models using Ollama, with no API bills: apart from the hardware you run it on, it is free to use.
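One way to configure your environment is to point Claude Code at the local Ollama server through environment variables. The Python sketch below assumes the variable names `ANTHROPIC_BASE_URL` and `ANTHROPIC_AUTH_TOKEN` (both read by Claude Code), a placeholder token value of `ollama` that a local server does not check, and a model already pulled with `ollama pull qwen3-coder`; the helper names are hypothetical. When run, it only prints the equivalent shell commands; `launch()` is there if you want to start Claude Code from Python.

```python
import os
import shlex
import shutil
import subprocess

# Assumption: Ollama on its default port; the token is a placeholder value.
OLLAMA_BASE_URL = "http://localhost:11434"


def claude_code_env(base_url: str = OLLAMA_BASE_URL, token: str = "ollama") -> dict:
    """Return a copy of the current environment pointed at a local Ollama server.

    ANTHROPIC_BASE_URL redirects Claude Code's API calls; ANTHROPIC_AUTH_TOKEN
    supplies a credential (assumed to be ignored by a local Ollama server).
    os.environ itself is left untouched.
    """
    env = dict(os.environ)
    env["ANTHROPIC_BASE_URL"] = base_url
    env["ANTHROPIC_AUTH_TOKEN"] = token
    return env


def claude_code_command(model: str = "qwen3-coder") -> list:
    """Build the argv that starts Claude Code against a specific Ollama model."""
    return ["claude", "--model", model]


def launch(model: str = "qwen3-coder") -> int:
    """Start Claude Code interactively; needs `claude` on PATH and Ollama running."""
    if shutil.which("claude") is None:
        raise FileNotFoundError("Claude Code CLI ('claude') not found on PATH")
    return subprocess.run(claude_code_command(model), env=claude_code_env()).returncode


if __name__ == "__main__":
    # Print the equivalent shell commands instead of launching anything.
    env = claude_code_env()
    for name in ("ANTHROPIC_BASE_URL", "ANTHROPIC_AUTH_TOKEN"):
        print(f"export {name}={shlex.quote(env[name])}")
    print(shlex.join(claude_code_command()))
```

Passing a modified copy of the environment to the child process, rather than editing `os.environ`, keeps the redirect scoped to that one Claude Code session. Swapping models is just a different `--model` value, for example `claude_code_command("gpt-oss")`, assuming that model is pulled.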

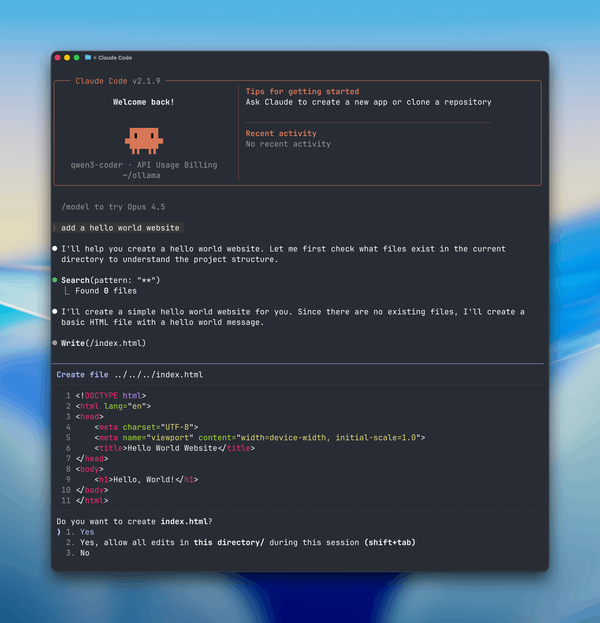


