Distributed AI Clusters: Insights Into Multi-Data-Center Connectivity and Edge Inference

At HPE, we combine unified data, AI, and edge-to-cloud expertise with deep collaboration to bring transformative solutions to life. This post explains how NVIDIA Spectrum-XGS Ethernet technology for scale-across networking enables inter-data-center connectivity with the high performance needed for AI.

The rise of AI training and edge inference is redefining network design. To deliver intelligence at scale, networks must evolve for modern AI workloads. This session explores centralized AI training with high-performance data center interconnects and distributed inference using HPE Juniper platforms for scalable, resilient, AI-native networking.

Our paper, “RDMA over Ethernet for Distributed AI Training at Meta Scale,” provides the details on how we design, implement, and operate one of the world’s largest AI networks at scale.

When many people think of distributed data centers, they imagine multiple large facilities connected for backup and failover. That model is still relevant, but it doesn't capture the full picture.

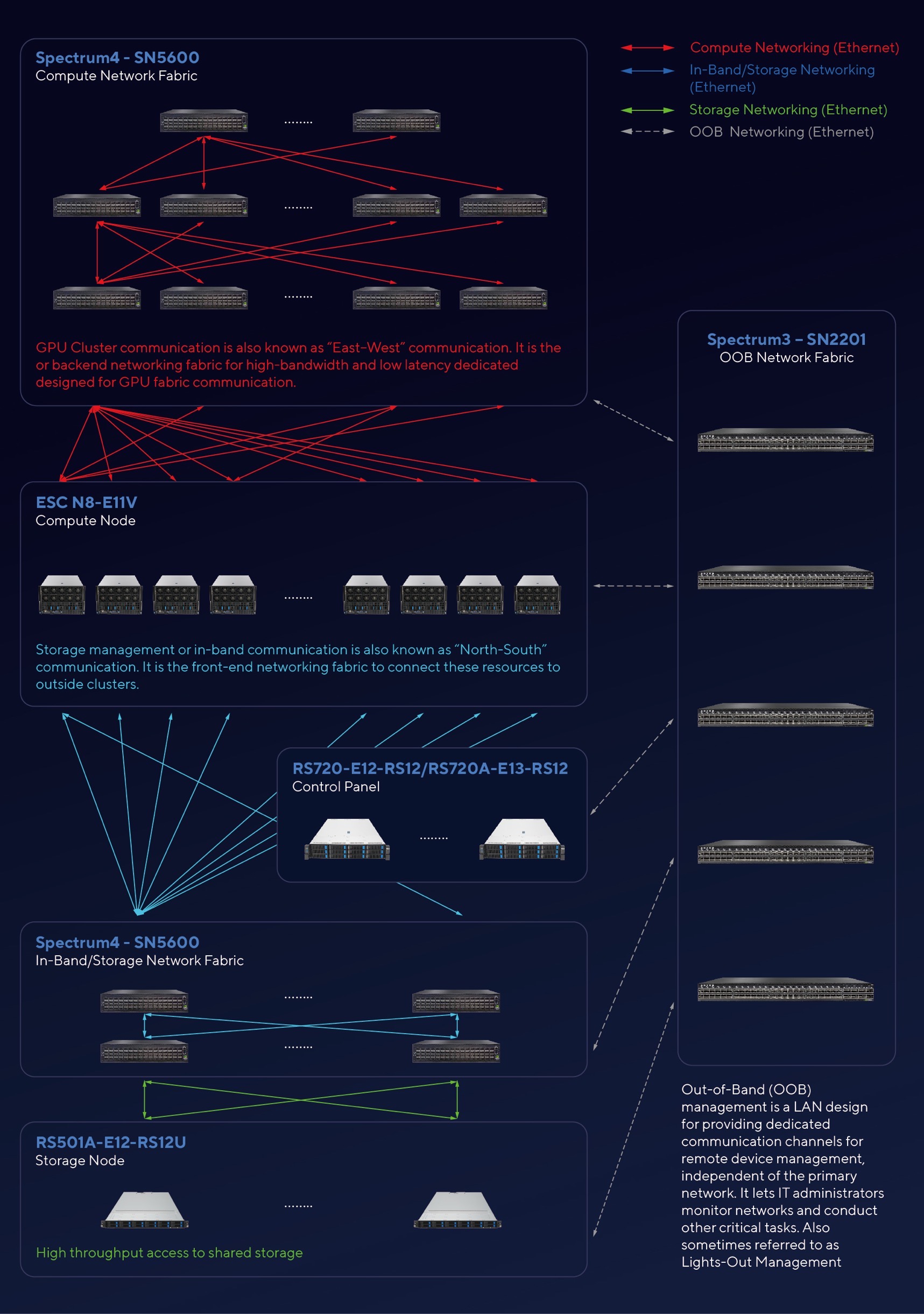

A new paradigm is emerging: distributed, multi-site GPU clusters that bring computation to the edge, where data originates and where decisions must be made in milliseconds, not seconds.

Through a combination of ambitious self-build plans, aggressive leasing, large partnerships, and innovative ultra-dense designs, Microsoft will lead the AI training market by scale, with multiple gigawatt-scale clusters. We highlight Prime Intellect as the principal open-source software training infrastructure targeting this multi-datacenter framework. Connecting multiple nearby data centers in the same region into one virtual cluster can extend the compute and memory resources available for a single training job.

This exploration dives into the philosophy behind distributed AI inference, the tangible benefits of AI clusters, and the emerging frontier of mobile neural processing units (NPUs) that promise to extend intelligent computing to the edge of our networks.
