How to Run a Local LLM: Complete Guide to Setup and Best Models (2025)

Learn how to run LLMs locally, explore top tools like Ollama and GPT4All, and integrate them with n8n for private, cost-effective AI workflows. This guide covers the latest methods, hardware requirements, and best practices for running local LLMs in 2025, including recent developments in model optimization, quantization techniques, and deployment tools.
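
To see why quantization matters on local hardware, note that the memory needed just to hold a model's weights is roughly the parameter count times the bits per weight, divided by eight. The sketch below assumes that simple formula and deliberately ignores runtime overhead such as the KV cache, activations, and per-block scale factors used by quantization formats, so real memory use will be somewhat higher. The 7-billion-parameter model is a hypothetical example.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory (GB, where 1 GB = 1e9 bytes) needed to store model weights.

    Rough estimate only: ignores KV cache, activations, and quantization
    metadata such as per-block scales.
    """
    bytes_total = num_params * bits_per_weight / 8  # 8 bits per byte
    return bytes_total / 1e9


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model at common precisions.
    for bits in (16, 8, 4):
        print(f"7B @ {bits}-bit: ~{weight_memory_gb(7e9, bits):.1f} GB")
```

Run as a script, it prints estimates of about 14.0, 7.0, and 3.5 GB for 16-, 8-, and 4-bit weights, which shows how 4-bit quantization lets a 7B model fit on machines with far less memory.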

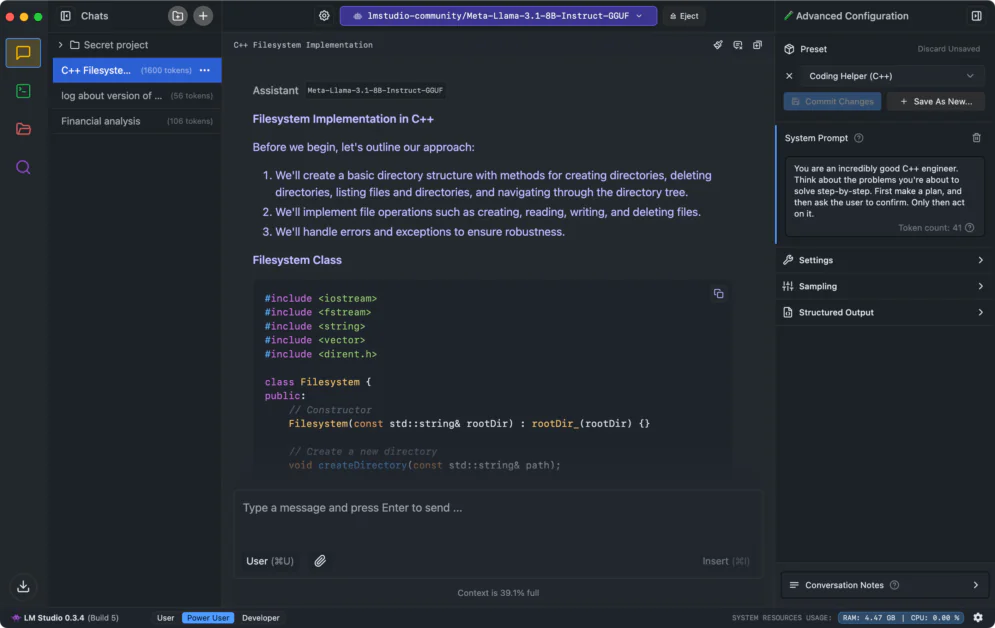

In this guide, we'll explore how to run an LLM locally, covering hardware requirements, installation steps, model selection, and optimization techniques. Whether you're a researcher, developer, or AI enthusiast, this guide will help you set up and deploy an LLM on your local machine efficiently. For anyone running LLMs locally, here's what matters most: when evaluating whether a machine is suitable for LLM workloads, prioritize memory bandwidth as your primary decision criterion. This guide by inteligenai walks you through every step, from hardware selection to security best practices, based on commonly reported benchmarks and practices from the local LLM community. Run AI locally in 2025: power, privacy, and performance at your fingertips. In 2025, developers are finding that running large language models locally isn't just possible; it's practical, fast, and fun. No more cloud costs, no privacy trade-offs, and no waiting on someone else's server.
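
One way to see why memory bandwidth matters: when a dense model generates text one token at a time, it must read roughly all of its weights from memory for every new token. Dividing bandwidth by model size therefore gives a rough upper bound on tokens per second. The sketch below applies that rule of thumb; the bandwidth and model-size figures are hypothetical placeholders, and real throughput will be lower because of compute limits, KV-cache reads, and software overhead.

```python
def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rough upper bound on decode speed for a dense model.

    Assumes every generated token requires reading all weights once and
    that memory bandwidth is the only bottleneck.
    """
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    model_gb = 4.0  # hypothetical: a ~7B model quantized to about 4 bits
    # Hypothetical bandwidth figures, for illustration only.
    machines = [("100 GB/s machine", 100.0), ("400 GB/s machine", 400.0), ("1000 GB/s GPU", 1000.0)]
    for label, bw in machines:
        print(f"{label}: <= {max_tokens_per_second(bw, model_gb):.0f} tokens/s")
```

With these placeholder numbers it prints upper bounds of 25, 100, and 250 tokens/s, illustrating why bandwidth, more than raw compute, tends to cap local generation speed.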

You'll also learn how to install, configure, and optimize Ollama for running AI models locally, with setup instructions, best practices, and troubleshooting tips for getting the best performance from your system. Running large language models (LLMs) locally has become one of the hottest AI trends of 2025. Developers, researchers, and even hobbyists are no longer limited to cloud APIs: thanks to innovations like Llama 2, Mistral, llama.cpp, and Ollama, you can run cutting-edge AI directly on your machine. We'll show seven ways to run LLMs locally with GPU acceleration on Windows 11, but the methods we cover also work on macOS and Linux. If you want to learn about LLMs from scratch, a good place to start is this course on large language models (LLMs).
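
Once Ollama is installed and a model has been pulled, it serves a local HTTP API, by default at `http://localhost:11434`. The sketch below sends one non-streaming request to its `/api/generate` endpoint using only the Python standard library. The model name `llama2` is an assumption, so substitute whichever model you have pulled; the Ollama server must already be running.

```python
import json
import urllib.error
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request asking for a single, non-streaming completion."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False, the full completion arrives in the "response" field.
    return body["response"]


if __name__ == "__main__":
    try:
        # Assumes a model named "llama2" has already been pulled (hypothetical choice).
        print(generate("llama2", "Explain quantization in one sentence."))
    except (urllib.error.URLError, TimeoutError) as exc:
        # Server not running, model missing, or request timed out.
        print(f"Could not get a reply from Ollama at {OLLAMA_URL}: {exc}")
```

Setting `"stream": False` keeps the example short; by default Ollama streams the reply as a sequence of JSON objects, which a production client would read incrementally.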
