LLM at Home: Trying the StarChat 16B Large Language Model, by Teemu Kanstren

Large language models (LLMs) are the greatest hype of recent times. It started with ChatGPT, but now there are numerous open-source or openly available (open access?) models to try locally, and most of them are on Hugging Face. Building (training) an entire LLM requires quite massive resources, but using these available (pretrained) models is very interesting and requires far fewer resources. In this post, I try the StarChat 16B large language model on a PC.
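
To put "fewer resources" in perspective, a common rule of thumb is that holding the weights alone takes roughly the number of parameters times the bytes per parameter. The sketch below assumes a 16-billion-parameter model (the size in the title) and deliberately ignores activations, the KV cache and runtime overhead, so real memory use will be higher. `weight_memory_gb` and the dtype labels are illustrative names, not part of any library.

```python
# Approximate storage per parameter for common weight formats (weights only).
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Rough memory needed just to hold the model weights, in GB (1e9 bytes).

    Ignores activations, the KV cache and framework overhead.
    """
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    n = 16e9  # assumption: a 16B-parameter model such as the one in the title
    for dtype in ("fp32", "fp16", "int8", "int4"):
        print(f"{dtype}: ~{weight_memory_gb(n, dtype):.0f} GB")
```

Run as a script, this prints about 64 GB for fp32, 32 GB for fp16, 16 GB for int8 and 8 GB for int4, which suggests why smaller quantized builds matter so much for running a model of this size on home hardware.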

StarChat β is trained on an "uncensored" variant of the OpenAssistant Guanaco dataset, filtered with the same recipe that was used for the ShareGPT datasets behind WizardLM.

LLMs small enough to run locally on your home PC or laptop can be incredibly useful tools. The latest models on the smaller side of the spectrum are smart, but also run smoothly on a wide range of computer hardware. In Build a Large Language Model (From Scratch), you learn how LLMs work from the inside out by coding one from the ground up, step by step.
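
StarChat models are prompted with a dialogue format in which each turn opens with a role token and closes with `<|end|>`. The helpers below are a minimal sketch of that format for a single user query, based on our reading of the StarChat model cards on Hugging Face; if the tokenizer you download ships its own chat template, prefer that instead. `build_starchat_prompt` and `extract_reply` are hypothetical helper names, not part of any library.

```python
def build_starchat_prompt(query: str, system: str = "") -> str:
    """Wrap one user query in the StarChat-style dialogue template.

    Each turn starts with a role token and ends with <|end|>; the prompt stops
    at <|assistant|> so the model continues speaking as the assistant.
    """
    return (
        f"<|system|>\n{system}<|end|>\n"
        f"<|user|>\n{query}<|end|>\n"
        f"<|assistant|>"
    )


def extract_reply(generated: str) -> str:
    """Return the text after the last <|assistant|> tag, cut at the first <|end|>."""
    reply = generated.split("<|assistant|>")[-1]
    return reply.split("<|end|>")[0].strip()
```

The model is asked to continue the prompt string; because it is expected to emit `<|end|>` when its turn is over, `extract_reply` can trim the raw output down to just the assistant's answer.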

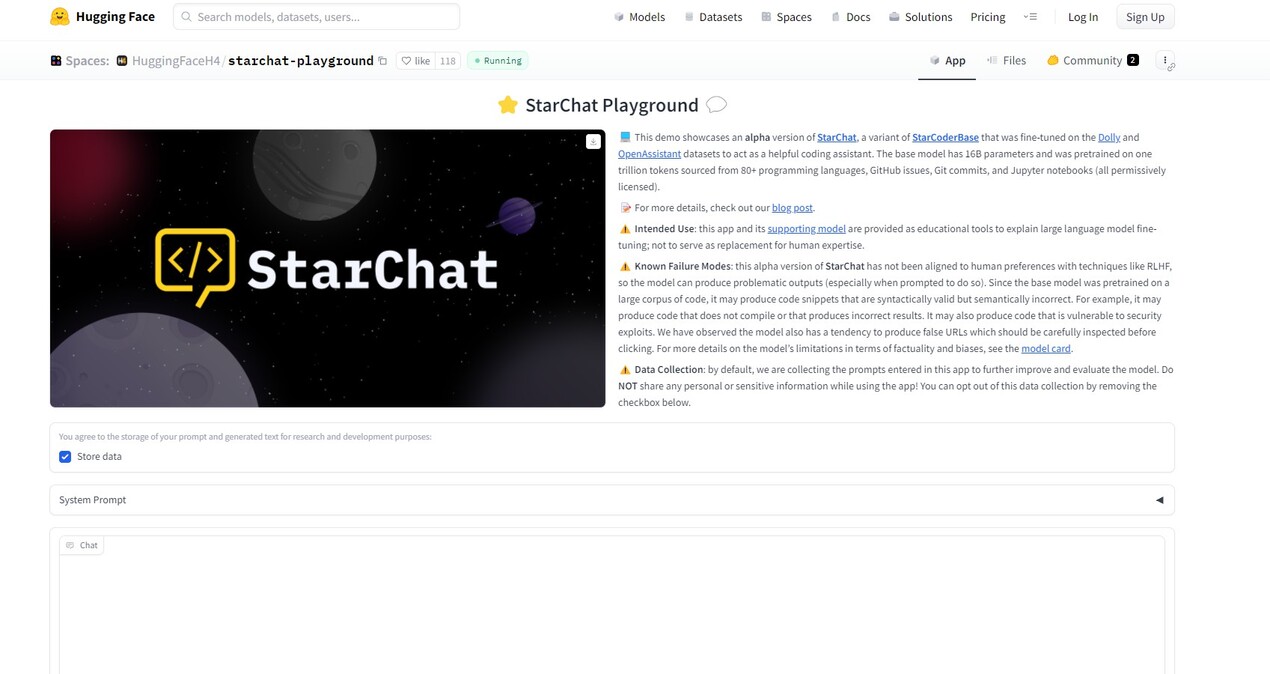

Running LLMs locally with tools like LM Studio or Ollama has many advantages, including privacy, lower costs, and offline availability. However, these models can be resource-intensive and require proper optimization to run efficiently.

Hugging Face is developing a new series of large language models, and the first one is called StarChat Alpha. It is a 16B-parameter GPT-like language model that has been fine-tuned on a combination of the OASST1 and Databricks Dolly 15k datasets.

LLMs have transformed AI, but relying on cloud services for every interaction can raise concerns about privacy, cost, and latency. The good news is that today's tools let you run powerful LLMs entirely on your local machine, from chat assistants to code generators, even on a laptop.

I'll patiently wait for a smaller quantized version of this model to experiment with at home, but the demo seemed pretty coherent. While I didn't actually test the code it provided, most local coding models I have tried break down immediately, and you can tell at a glance that their code won't work.
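
Both LM Studio and Ollama can serve a model over a local HTTP API. As a minimal sketch, assuming an Ollama server running on its default port 11434 and a model already pulled under some tag (the tag `my-local-model` used here is hypothetical), a non-streaming request to Ollama's `/api/generate` endpoint needs only the Python standard library. `build_generate_request` and `generate` are illustrative helper names.

```python
import json
import urllib.request

# Ollama's default local endpoint for single-prompt generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str,
                           url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a POST request asking for one complete (non-streamed) answer."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the request to a running local server and return the generated text."""
    req = build_generate_request(model, prompt)
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]
```

With a server running, `generate("my-local-model", "Write a Python function that reverses a string.")` returns the model's answer as a string. Setting `stream` to `False` makes the server reply with a single JSON object whose `response` field holds the full completion, rather than a sequence of partial chunks, which keeps the client code short at the cost of waiting for the whole answer.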
