Revolutionizing Code Completion With Codestral Mamba The Next Gen

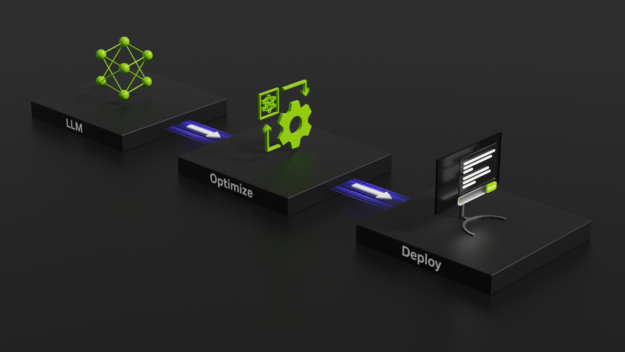

This post explores the benefits of Codestral Mamba, highlights its Mamba-2 architecture and the inference optimizations supported in NVIDIA TensorRT-LLM, and describes how NVIDIA NIM simplifies deployment. Codestral Mamba is a coding model developed by Mistral and built on the Mamba-2 architecture to improve code completion for developers.

Mistral's release announcement puts it this way: "As a tribute to Cleopatra, whose glorious destiny ended in tragic snake circumstances, we are proud to release Codestral Mamba, a Mamba2 language model specialised in code generation, available under an Apache 2.0 license." Whether you're debugging, refactoring, or writing new code, Codestral Mamba is designed to deliver precise, context-aware code output. In the rapidly evolving field of generative AI, coding models have become indispensable tools for developers, enhancing productivity and precision in software development. This guide looks at how Codestral Mamba improves coding productivity: its features, performance benchmarks, and comparisons with other leading coding models.

Mistral AI has announced the release of its latest model, Codestral Mamba 7B. The model is based on the Mamba-2 architecture, was trained with a context length of 256k tokens, and is built for code generation tasks. By using the Mamba state space model, Codestral Mamba addresses limitations of traditional transformer models in handling long code sequences. Its open-source nature, multilingual support, and ability to process large contexts position it as a valuable alternative for developers.

Codestral Mamba's ability to reach transformer-level accuracy while maintaining linear-time inference and a theoretically infinite context window makes it a powerful tool for developers.
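To make the "linear-time inference" claim more concrete, here is a minimal sketch of a toy diagonal state space recurrence in plain Python. It is not Codestral Mamba's actual layer: the function names (`ssm_step`, `run_ssm`) and the weights `a`, `b`, `c` are hypothetical, and real Mamba layers make these parameters depend on the input. The sketch only illustrates the property the article describes: each new token updates a fixed-size state, so the work per token does not grow with the length of the context.

```python
# Toy diagonal linear state space model (SSM).
# Illustrative assumption only: this is NOT Codestral Mamba's real layer.

def ssm_step(state, x, a, b, c):
    """Advance the recurrence by one token.

    state: list of floats, the fixed-size hidden state h
    x:     scalar input for the current token
    a, b, c: per-channel decay, input, and output weights (hypothetical)
    Returns (new_state, y), where y is the output for this token.
    """
    # h_t = a * h_{t-1} + b * x_t   (element-wise per channel)
    new_state = [a_i * h_i + b_i * x for a_i, h_i, b_i in zip(a, state, b)]
    # y_t = sum over channels of c * h_t
    y = sum(c_i * h_i for c_i, h_i in zip(c, new_state))
    return new_state, y


def run_ssm(xs, a, b, c):
    """Process a sequence one token at a time.

    The state never grows, so the cost per token is O(len(a)) no matter
    how many tokens came before, and total cost is linear in len(xs).
    """
    state = [0.0] * len(a)
    ys = []
    for x in xs:
        state, y = ssm_step(state, x, a, b, c)
        ys.append(y)
    return ys, state


if __name__ == "__main__":
    ys, final_state = run_ssm([1.0, 0.0, 0.0], a=[0.5], b=[1.0], c=[2.0])
    print(ys, final_state)
```

By contrast, a transformer attends over every earlier token at each generation step, so its per-token cost and cache size grow with the context. That difference is what the fixed-size state in this sketch is meant to show.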

