Run an LLM Locally with LM Studio (KDnuggets)

How to Run an LLM Locally on Your Computer with LM Studio (Hongkiat)

Ever wanted to run an LLM on your computer? You can do so now with the free and powerful LM Studio. Run local AI models such as gpt-oss, Llama, Gemma, Qwen, and DeepSeek privately on your computer.

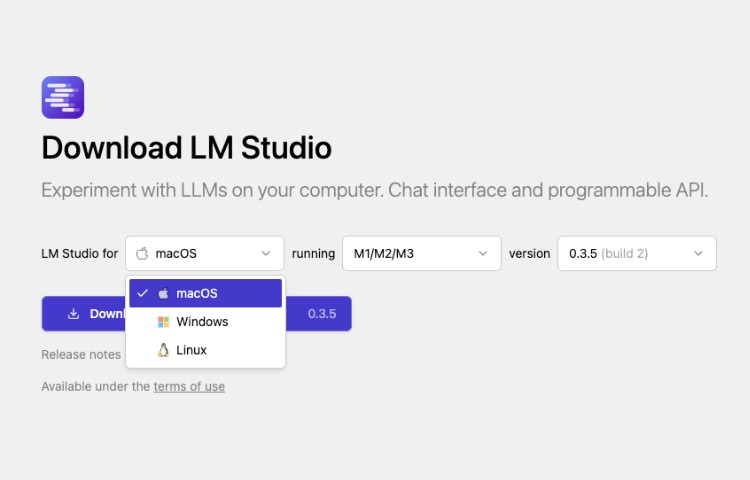

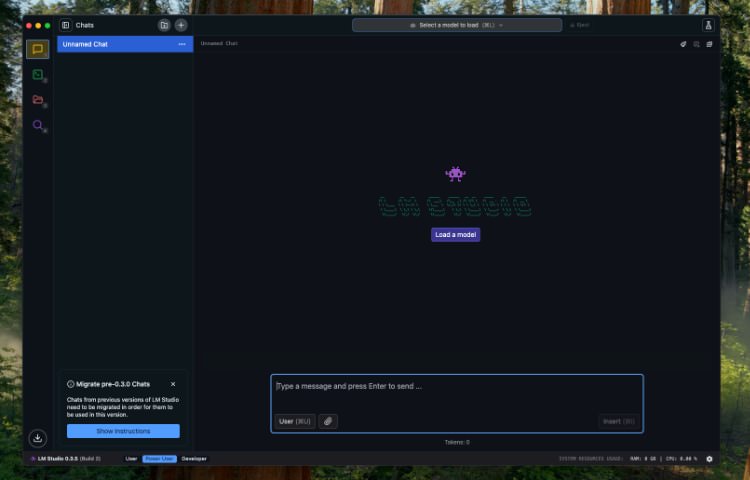

In this post, we will discuss five ways to use large language models (LLMs) locally. Most of the software is compatible with all major operating systems and can be easily downloaded and installed for immediate use. By using LLMs on your laptop, you have the freedom to choose your own model.

LM Studio attempts to bridge this gap. But does it deliver? Let's dive deep.

What is LM Studio? LM Studio is a performant and friendly desktop application for running large language models (LLMs) locally on your own hardware.

This guide will show you how to set up an LLM using LM Studio. I'll also highlight some popular CPU setups to match your hardware and include a quick comparison of top models, including the DeepSeek R1 distilled series. It will also walk you through how to set up and run the gpt-oss-20b or gpt-oss-120b models in LM Studio, including how to chat with them, use MCP servers, or interact with the models through LM Studio's local development API.
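To get a feel for which model sizes match your hardware, a common back-of-the-envelope estimate for the weights alone is parameters × bits per weight ÷ 8 bytes. The sketch below is a rough rule of thumb, not how LM Studio itself decides what fits. The 4-bit quantization, the 80% headroom factor, and the helper names `estimate_weight_memory_gb` and `fits_in_memory` are assumptions for illustration. It also ignores the KV cache, activations, and runtime overhead, so real memory use will be higher.

```python
# Rough rule of thumb (an assumption, not LM Studio's own logic):
#   weight_bytes ~= parameters * bits_per_weight / 8
# KV cache, activations, and runtime overhead are NOT included.


def estimate_weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_memory(
    params_billion: float,
    bits_per_weight: float,
    available_gb: float,
    headroom: float = 0.8,  # hypothetical safety margin: use at most 80% of memory
) -> bool:
    """True if the weights alone fit within `headroom` of the available memory."""
    needed = estimate_weight_memory_gb(params_billion, bits_per_weight)
    return needed <= available_gb * headroom


if __name__ == "__main__":
    ram_gb = 16  # example: a machine with 16 GB of RAM
    for params in (20, 120):  # nominal sizes taken from the gpt-oss-20b / gpt-oss-120b names
        gb = estimate_weight_memory_gb(params, bits_per_weight=4)
        ok = fits_in_memory(params, 4, ram_gb)
        print(f"{params}B @ 4-bit: ~{gb:.0f} GB of weights, fits in {ram_gb} GB: {ok}")
```

Under these assumptions, a 20B-parameter model at 4 bits needs about 10 GB for its weights and fits on a 16 GB machine, while a 120B model needs about 60 GB and does not. The sizes in model names are nominal, so check each model card for exact parameter counts and quantization.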

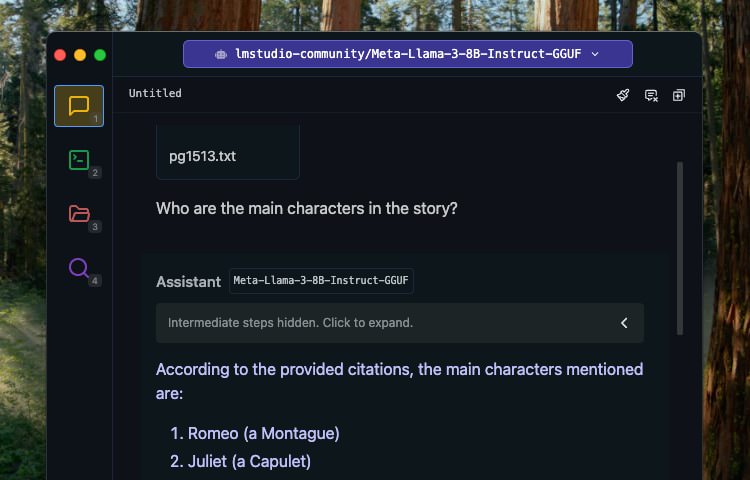

This guide walks you through the complete process of setting up a production-grade local LLM on an Apple Silicon Mac (an M4 MacBook Air with 16 GB of RAM), from initial setup to network-accessible deployment.

Learn how to install, configure, and use LM Studio to run large language models locally. This step-by-step guide is tailored for developers and API teams, with practical integration tips and workflow enhancements using Apidog.

In this video, you'll learn how to use LM Studio to download, run, and interact with large language models (LLMs) locally on your machine.

💡 What you'll learn:
• What LM Studio is and why it.

If you're building other tools or want to access your LLM remotely, LM Studio can act as a local server. Enable the API server from the settings menu and call it from browser extensions or local scripts.
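Here is a minimal sketch of calling that server from a local script using only the Python standard library. It assumes the server exposes OpenAI-compatible endpoints at LM Studio's default address, `http://localhost:1234/v1`, and that a model is already loaded. The model identifier in `MODEL` is a placeholder, and `build_chat_request`, `list_models`, and `chat` are hypothetical helper names. If you changed the port or serve on your local network, adjust `BASE_URL` accordingly.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running at its default address with a
# model loaded. Adjust the host/port if you changed them in the app.
BASE_URL = "http://localhost:1234/v1"
# Hypothetical placeholder: replace with an identifier returned by list_models().
MODEL = "openai/gpt-oss-20b"


def build_chat_request(prompt: str, model: str = MODEL, temperature: float = 0.7) -> dict:
    """Build an OpenAI-style /chat/completions payload with a single user message."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }


def list_models(base_url: str = BASE_URL, timeout: float = 10.0) -> list:
    """Return the model identifiers reported by GET /models."""
    with urllib.request.urlopen(f"{base_url}/models", timeout=timeout) as resp:
        body = json.load(resp)
    return [m["id"] for m in body["data"]]


def chat(prompt: str, model: str = MODEL, base_url: str = BASE_URL, timeout: float = 120.0) -> str:
    """POST one prompt to /chat/completions and return the assistant's reply text."""
    data = json.dumps(build_chat_request(prompt, model)).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    # OpenAI-compatible responses carry the text in choices[0].message.content.
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    try:
        print("Available models:", list_models())
        print(chat("In one sentence, what does running an LLM locally mean?"))
    except OSError as exc:  # URLError, refused connections, and timeouts are OSError subclasses
        print(f"Could not reach the server at {BASE_URL}: {exc}")
```

Because the endpoints follow the OpenAI request format, the official `openai` client pointed at the same base URL should also work. The standard-library version simply avoids an extra dependency.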

